Can a knowledge graph change the way we build and maintain large codebases?

Andrej Karpathy inspired the idea that structured graphs can make AI navigation far more efficient. We can now graphify a project with Claude, turning the entire codebase into a living map of facts, files, and relations.

This approach uses a powerful coding assistant to keep our code and data organized. It prevents the common pitfalls of stateless AI sessions by storing context in a clear knowledge graph.

In this guide, we show how to query the codebase, speed up coding tasks, and keep an overview of our project. We want to help you spend less time searching and more time building reliable software.

Key Takeaways

- We can convert a codebase into a structured graph for clearer insights.

- A persistent assistant reduces lost context across sessions.

- Effective query tools make coding faster and less error-prone.

- Using a coding assistant streamlines project organization.

- The workflow improves access to key data and speeds development.

Understanding the Token Efficiency Challenge

Orienting a model to a sprawling codebase eats tokens before any useful analysis begins.

When we open a session, the assistant often spends most of its budget just learning our project layout. In large repositories the initial orientation cost dominates token usage.

For projects that exceed 500 files, the repeated act of reading raw files becomes expensive. Every time we start a new session, the model may re-scan many files to regain context.

The Cost of Orientation

Orientation can account for more token burn than the actual coding work. That overhead compounds over time and raises operating expenses for teams.

The Problem with Stateless Sessions

Stateless sessions force us to pay for the same context repeatedly. We waste tokens and time when the model re-reads raw files instead of using a persistent map.

- In big codebases, Claude Code often consumes tokens just to orient itself.

- Reading raw files repeatedly inflates token usage and costs.

- Reducing repeated reads can cut token usage by up to 70x on large projects.

| Repository Size | Typical Orientation Cost | Token Savings with Persistent Map |
|---|---|---|
| 100 files | Low–Moderate | 2–5x |
| 500 files | High | 10–50x |
| 1,000+ files | Very High | 50–70x |
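To make the orientation arithmetic concrete, here is a rough sketch. It assumes about four characters per token (a rule of thumb, not a measured value), and the file sizes, session count, and function names are all hypothetical rather than anything Graphify reports.

```python
# Rough estimate of how many tokens repeated orientation costs.
# Assumption: ~4 characters per token (rule of thumb, not a measured value).
CHARS_PER_TOKEN = 4


def estimate_tokens(char_count: int) -> int:
    """Convert a character count into an approximate token count."""
    return char_count // CHARS_PER_TOKEN


def orientation_cost(file_sizes: list[int], sessions: int) -> int:
    """Tokens spent if every session re-reads every file."""
    per_session = sum(estimate_tokens(size) for size in file_sizes)
    return per_session * sessions


def map_cost(summary_chars: int, sessions: int) -> int:
    """Tokens spent if every session reads one compact summary instead."""
    return estimate_tokens(summary_chars) * sessions


# Hypothetical project: 500 files of 8,000 characters each, 10 sessions.
raw = orientation_cost([8_000] * 500, sessions=10)
# Hypothetical graph summary of 200,000 characters, read once per session.
mapped = map_cost(200_000, sessions=10)

print(f"raw re-reads:   {raw:,} tokens")
print(f"persistent map: {mapped:,} tokens")
print(f"ratio:          {raw / mapped:.0f}x")
```

With these made-up inputs the ratio lands at 20x. The real figure depends entirely on how large the files are and how compact the map is.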

Why We Should Graphify with Claude

We turn scattered research notes, PDFs, and code into a single map that our AI can follow. This system acts as an independent organizer for our files and ideas.

The result is a usable knowledge graph that links facts, code, and plain documents. Our assistant no longer scans blindly. Instead it walks a pre-computed map to find relevant nodes.

That change matters when we ask a hard question. Answers come from explicit relationships, not just surface similarity. We store context persistently, so the model builds on prior insights.

- Faster navigation across diverse documents and PDFs.

- Grounded answers rooted in our project data.

- Persistent knowledge that grows over time.

| Feature | Benefit | Best For |
|---|---|---|
| Pre-computed graph | Predictable navigation | Large codebases |
| Persistent system state | Builds on prior work | Research collections |
| Document and PDF indexing | Context-rich answers | Mixed-format projects |
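The idea of walking explicit relationships instead of scanning can be sketched with a tiny in-memory graph. The triples, relation names, and helper functions below are hypothetical and only illustrate the data structure; they are not Graphify's internal format.

```python
from collections import defaultdict

# Hypothetical triples: (source node, relation, target node).
TRIPLES = [
    ("auth.py", "imports", "tokens.py"),
    ("auth.py", "documented_in", "auth-design.pdf"),
    ("tokens.py", "defines", "issue_token"),
    ("api.py", "imports", "auth.py"),
]


def build_index(triples):
    """Index outgoing edges by source node for direct lookups."""
    index = defaultdict(list)
    for source, relation, target in triples:
        index[source].append((relation, target))
    return index


def related(index, node, relation):
    """Return the targets reachable from `node` through one relation type."""
    return [target for rel, target in index[node] if rel == relation]


index = build_index(TRIPLES)
print(related(index, "auth.py", "imports"))        # code dependency
print(related(index, "auth.py", "documented_in"))  # linked document
```

Because code and documents share one index, the same lookup answers both "what does this file import" and "where is it documented".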


Getting Started with the Installation Process

We start by installing the mapping tools on our machine. A few commands in the terminal prepare the assistant to parse our codebase and index files automatically.

Platform Specific Commands

Run the standard install sequence to add the required tools:

pip install graphifyy && graphify install

After the install completes, point the tool at your project folder. The tool scans files and code, then builds the initial graph structure.

- The CLI provides simple commands to manage the map and update nodes.

- The setup is compatible with Claude Code and other dev environments.

- Ensure the assistant is configured to recognize the new system and output files.

Verify the install by checking that the system lists your top-level folders and creates output JSON and HTML reports.

| Step | Command | Expected Output |
|---|---|---|
| Install tools | pip install graphifyy | Packages installed |
| Initialize | graphify install | Graph baseline created |
| Point to project | graphify scan ./my-folder | Index of files and code |
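The verification step can be scripted. This is a minimal sketch that assumes the outputs land in a graphify-out folder and use the file names mentioned in this guide; the exact set may differ between versions, and `missing_outputs` is a hypothetical helper.

```python
from pathlib import Path

# File names taken from this guide; the exact set may vary between versions.
EXPECTED_OUTPUTS = ("graph.json", "graph.html", "GRAPH_REPORT.md")


def missing_outputs(out_dir: str) -> list[str]:
    """Return the expected output files that are absent from `out_dir`."""
    root = Path(out_dir)
    return [name for name in EXPECTED_OUTPUTS if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_outputs("graphify-out")
    if missing:
        print("missing:", ", ".join(missing))
    else:
        print("all expected outputs found")
```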

Mapping Your Project Architecture

We begin by tracing how files and modules connect to reveal the true shape of our codebase.

First, we identify core relationships and label key nodes. This gives us a clear view of the project and how pieces communicate.

Next, we visualize connections so we can spot highly connected components. A simple map shows hubs, weak links, and areas that need refactoring.

Documenting these links makes the system queryable. We can ask targeted questions and get answers rooted in our actual structure.

- See which modules are most coupled and where to simplify.

- Detect potential bottlenecks before they affect scaling.

- Create a shared reference so all team members agree on the design.

| Focus | Benefit | Action |
|---|---|---|
| Connections | Faster debugging | Map edges |
| Hubs | Prioritize tests | Analyze coupling |
| Documentation | Team alignment | Publish graph |

Mapping our architecture helps us make better choices about future design. It keeps the team aligned and the codebase healthier over time.
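Spotting hubs comes down to counting connections. The sketch below ranks modules by degree over a hypothetical list of import edges; the module names and helper functions are illustrative only.

```python
from collections import Counter

# Hypothetical import edges: (importing module, imported module).
EDGES = [
    ("api", "auth"),
    ("api", "db"),
    ("auth", "db"),
    ("jobs", "db"),
    ("reports", "db"),
    ("cli", "api"),
]


def degree_counts(edges):
    """Count how many edges touch each module (incoming plus outgoing)."""
    counts = Counter()
    for source, target in edges:
        counts[source] += 1
        counts[target] += 1
    return counts


def top_hubs(edges, n=2):
    """Return the n most connected modules, ties broken alphabetically."""
    counts = degree_counts(edges)
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:n]


print(top_hubs(EDGES))
```

Modules at the top of this ranking are the ones where a change ripples furthest, so they are reasonable candidates for extra tests.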

Exploring the Generated Output Files

After a scan, the project produces a small set of files that together capture structure and context. These outputs let us move from raw code to fast, repeatable exploration.

Interactive HTML Visualizations

Open graph.html to view an interactive map of nodes and links. The page shows how modules, functions, and concepts connect.

We can zoom, search, and highlight hubs. That makes it easy to spot key areas and navigate the codebase visually.

Understanding the Report

The GRAPH_REPORT.md file summarizes top connections and suggested questions. It gives quick context so we know where to dig next.

- High-level summaries for fast reviews.

- Suggested queries to test assumptions.

- Notes on nodes that need attention.

Persistent JSON Data

graph.json stores the persistent data we query. Relying on this file saves us from re-reading every source file during each session.

The cache/ directory stores incremental results so we only reprocess changed files. That reduces CPU time and speeds updates.

| Output | Purpose | Benefit |
|---|---|---|
| graph.html | Interactive visualization | Fast exploration |
| GRAPH_REPORT.md | Summary & questions | Quick understanding |
| graph.json | Persistent data | Efficient queries |
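Reading the persistent file instead of the sources might look like the sketch below. It assumes a node-link layout with "nodes" and "links" arrays, which is a guess for illustration; check the keys in your own graph.json before relying on it.

```python
import json

# Assumed layout: a node-link document with "nodes" and "links" arrays.
# The real graph.json schema may differ; adjust the keys to match your file.
SAMPLE = """
{
  "nodes": [{"id": "api.py"}, {"id": "auth.py"}, {"id": "db.py"}],
  "links": [
    {"source": "api.py", "target": "auth.py", "relation": "imports"},
    {"source": "auth.py", "target": "db.py", "relation": "imports"}
  ]
}
"""


def load_adjacency(raw_json: str) -> dict[str, list[str]]:
    """Build a source -> targets lookup from node-link JSON text."""
    data = json.loads(raw_json)
    adjacency = {node["id"]: [] for node in data["nodes"]}
    for link in data["links"]:
        adjacency[link["source"]].append(link["target"])
    return adjacency


adjacency = load_adjacency(SAMPLE)
print(adjacency["api.py"])
```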

Leveraging Tree-Sitter for Local Code Analysis

By parsing syntax trees on our machines, we capture precise program structure fast.

We use Tree-sitter to extract the abstract syntax tree (AST) of each file locally. This gives us a clear list of classes, functions, and imports without any network calls.

Keeping analysis on our device protects sensitive code and keeps repository data private. The parser runs quickly and scales to large folders.

Tree-sitter identifies functions and classes reliably. That lets us build a precise map of symbols and relations before any natural language step.

- Local parsing: no external APIs, lower risk.

- Accurate extraction: functions, classes, imports.

- Fast results: high throughput for large codebases.

| Aspect | Benefit | Why it matters |
|---|---|---|
| AST extraction | Deterministic output | Trustworthy structure |
| Local runtime | Data stays on disk | Improved security |
| Language support | Many grammars | Broad coding coverage |

We trust Tree-sitter to feed the graph with factual structure rather than inferred guesses. That makes our system both fast and reliable.
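To show what deterministic symbol extraction looks like, here is a small sketch. It swaps in Python's built-in `ast` module rather than Tree-sitter, so it needs no installed grammars and only handles Python source. The idea is the same: walk a syntax tree locally and collect classes, functions, and imports.

```python
import ast

SOURCE = '''
import os
from pathlib import Path

class Loader:
    def load(self, path):
        return Path(path).read_text()

def main():
    return Loader().load(os.devnull)
'''


def extract_symbols(source: str) -> dict[str, list[str]]:
    """Collect class names, function names, and imported modules."""
    symbols = {"classes": [], "functions": [], "imports": []}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ClassDef):
            symbols["classes"].append(node.name)
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            symbols["functions"].append(node.name)
        elif isinstance(node, ast.Import):
            symbols["imports"].extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom):
            symbols["imports"].append(node.module or "")
    # Sort so the result does not depend on traversal order.
    return {kind: sorted(names) for kind, names in symbols.items()}


print(extract_symbols(SOURCE))
```

The source is parsed, never executed, and nothing leaves the machine. That is the property the local-analysis approach relies on.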

How Community Detection Enhances Data Relationships

By clustering nodes based on topology, we expose meaningful relationships across the codebase.

We use Leiden community detection to group related concepts inside the project graph without relying on a vector database. This method exploits structural links instead of text similarity.

That focus on topology helps us find hidden connections and the true relationship between modules. We can see which parts form tight neighborhoods and which sit on the boundary.

- Discover clusters of related files and code that reflect real design intent.

- Reveal non-obvious relationships that standard search misses.

- Organize the project by structural proximity for clearer navigation and maintenance.

Exploring these communities gives us deeper insights and helps keep the project clean over time. The result is faster onboarding, smarter refactoring, and a more resilient codebase.
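The sketch below does not implement Leiden. It only computes modularity, one of the quality scores that Leiden-style methods can optimize, to show why grouping by topology separates a good partition from a poor one. The graph and both partitions are hypothetical.

```python
# Two triangles joined by a single bridge edge (c-d).
EDGES = [
    ("a", "b"), ("b", "c"), ("a", "c"),
    ("d", "e"), ("e", "f"), ("d", "f"),
    ("c", "d"),
]

BY_STRUCTURE = [{"a", "b", "c"}, {"d", "e", "f"}]    # follows the triangles
ARBITRARY = [{"a", "d"}, {"b", "e"}, {"c", "f"}]     # ignores the links


def modularity(edges, communities):
    """Score a partition of an undirected graph; higher means tighter clusters."""
    m = len(edges)
    degree = {}
    for a, b in edges:
        degree[a] = degree.get(a, 0) + 1
        degree[b] = degree.get(b, 0) + 1
    membership = {node: i for i, group in enumerate(communities) for node in group}

    score = 0.0
    for i, group in enumerate(communities):
        # Edges with both endpoints inside this community.
        internal = sum(1 for a, b in edges if membership[a] == i and membership[b] == i)
        total_degree = sum(degree.get(node, 0) for node in group)
        score += internal / m - (total_degree / (2 * m)) ** 2
    return score


print(round(modularity(EDGES, BY_STRUCTURE), 4))
print(round(modularity(EDGES, ARBITRARY), 4))
```

The partition that follows the triangles scores higher than the arbitrary one. A community detection algorithm searches for partitions that push this kind of score up.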

Managing Confidence Tags for Better Accuracy

Every link in our map carries a tag that reveals how confident we are about that connection.

We mark each relationship as EXTRACTED, INFERRED, or AMBIGUOUS. That label tells us whether the data came directly from code, was inferred by heuristics, or needs human review.

This transparency improves trust in the graph. When a relationship looks questionable, we can quickly find its tag and decide the next step.

In practice, we run periodic checks on AMBIGUOUS edges. Manual reviews and small tests resolve uncertain links. That keeps our system reliable and our assistant grounded on accurate facts.

- EXTRACTED: factual, low review cost.

- INFERRED: probable, needs spot checks.

- AMBIGUOUS: flag for manual validation.

| Tag | Meaning | Recommended Action |
|---|---|---|
| EXTRACTED | Directly parsed from source | Accept; add to cache |
| INFERRED | Derived by heuristics or ML | Spot-check during reviews |
| AMBIGUOUS | Unclear origin or low confidence | Schedule manual validation |
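The periodic review described above can be sketched as a filter over tagged edges. The three tag names come from this guide; the `Edge` class, the sample edges, and the `review_queue` helper are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    EXTRACTED = "EXTRACTED"
    INFERRED = "INFERRED"
    AMBIGUOUS = "AMBIGUOUS"


@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    relation: str
    confidence: Confidence


# Recommended actions, mirroring the table above.
ACTIONS = {
    Confidence.EXTRACTED: "accept",
    Confidence.INFERRED: "spot-check",
    Confidence.AMBIGUOUS: "manual validation",
}

# Hypothetical edges for illustration.
EDGES = [
    Edge("api.py", "auth.py", "imports", Confidence.EXTRACTED),
    Edge("auth.py", "sso-notes.pdf", "described_by", Confidence.INFERRED),
    Edge("jobs.py", "legacy.py", "calls", Confidence.AMBIGUOUS),
]


def review_queue(edges):
    """Return edges that need a human look, most uncertain first."""
    pending = [e for e in edges if e.confidence is not Confidence.EXTRACTED]
    # False sorts before True, so AMBIGUOUS edges come first.
    return sorted(pending, key=lambda e: e.confidence is not Confidence.AMBIGUOUS)


for edge in review_queue(EDGES):
    print(edge.source, "->", edge.target, ":", ACTIONS[edge.confidence])
```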

Querying Your Knowledge Graph Effectively

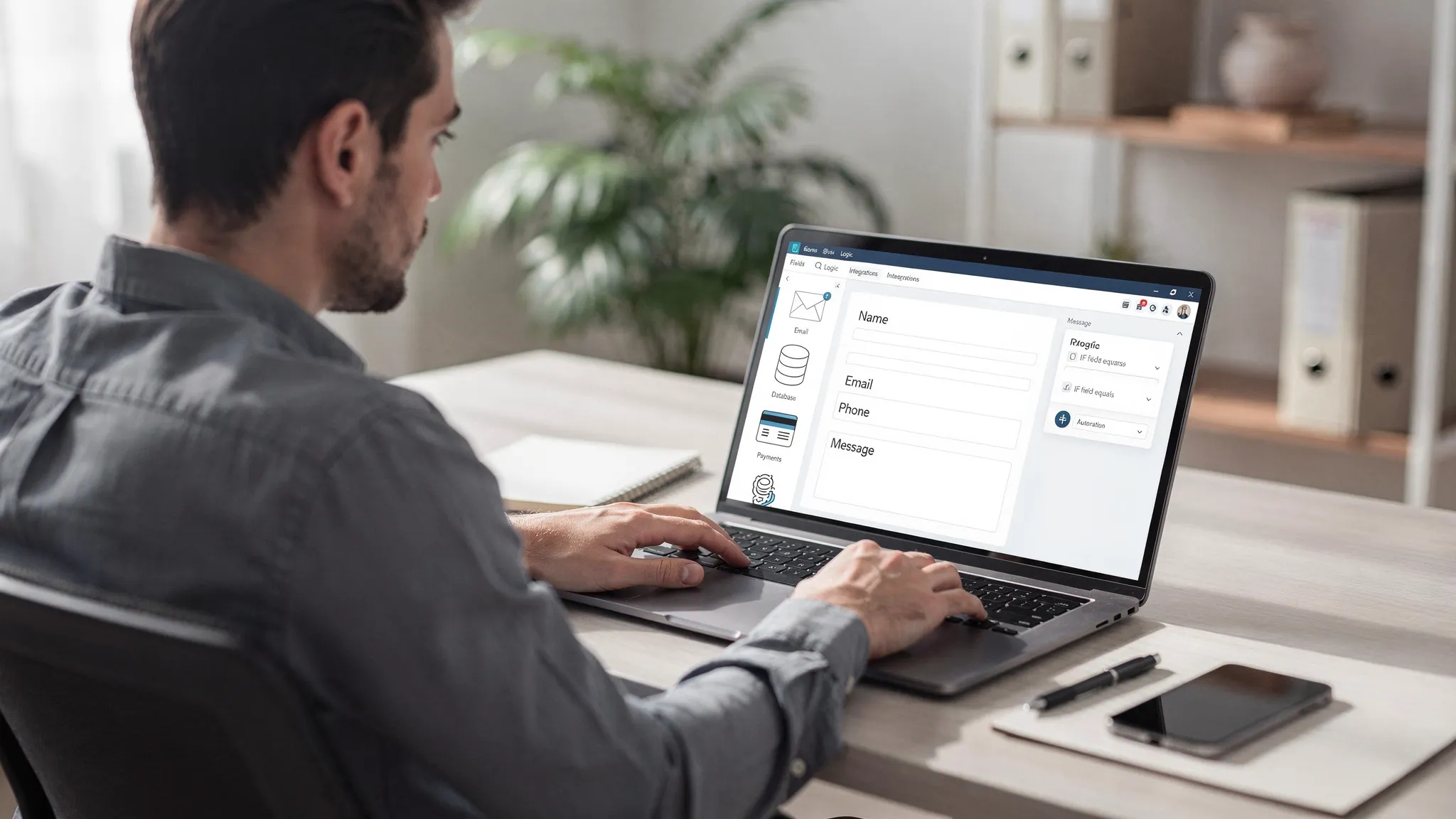

We make the map actionable by asking clear, focused questions. A few simple commands turn the graph into direct answers so we do not scan every file or waste time on irrelevant data.

Using Natural Language Queries

We speak to the system in plain language. Natural language queries let us find specific functions, design intents, or ownership notes without reading raw files.

graphify query commands accept conversational prompts and return node lists, edge types, and a confidence tag for each result.

Navigating Subgraphs

When a topic is large, we limit scope by exploring a subgraph. The --budget flag caps the token output so we only send relevant context to the assistant.

That keeps costs down and makes answers sharper. We can step through neighbors, inspect connection types, and trace actual paths across the graph.

- Use short, specific prompts to get precise nodes.

- Apply --budget to reduce tokens and speed responses.

- Inspect confidence labels before acting on inferred links.

| Action | Command | Benefit |
|---|---|---|
| Find ownership | graphify query "who owns X" | Direct answer without scanning raw files |
| Trace dependency | graphify query --subgraph "moduleA -> moduleB" | Focused path with confidence tags |
| Limit output | graphify query --budget 200 "explain X" | Lower token use and faster replies |
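One way to think about a budget cap is as a breadth-first walk that stops once the selected context would exceed a token limit. The sketch below illustrates that idea under assumed per-node token costs; it is not how the graphify CLI implements its budget flag.

```python
from collections import deque

# Hypothetical adjacency list and per-node context sizes in tokens.
GRAPH = {
    "auth": ["tokens", "db"],
    "tokens": ["crypto"],
    "db": ["pool"],
    "crypto": [],
    "pool": [],
}
COST = {"auth": 80, "tokens": 60, "db": 70, "crypto": 50, "pool": 40}


def subgraph_within_budget(graph, cost, start, budget):
    """Breadth-first walk from `start`, stopping before the budget is exceeded."""
    selected, spent = [], 0
    queue, seen = deque([start]), {start}
    while queue:
        node = queue.popleft()
        if spent + cost[node] > budget:
            break  # nearest nodes come first, so stop at the first overflow
        selected.append(node)
        spent += cost[node]
        for neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return selected, spent


print(subgraph_within_budget(GRAPH, COST, "auth", budget=200))
```

Breadth-first order keeps the nodes closest to the starting point, which is usually the context most relevant to the question.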

Automating Workflows with Git Hooks

We keep our project map current by baking updates into the Git lifecycle. Run graphify hook install once and the repository starts updating automatically on commits and branch switches.

The installed hook triggers an AST rebuild whenever we commit. That means our graph reflects the latest code without manual steps. We no longer pause coding to refresh the map.

Integration with Claude Code makes the assistant use the fresh graph for faster answers. The background process stays quiet. We focus on features, not on syncing data.

- Automatic rebuilds: keep the map synchronized after each commit.

- Branch-aware updates: switch branches and the graph follows.

- Manage hooks: enable, disable, or customize when updates run.

Hooks reduce the risk of stale context and make collaborative work safer. They give us confidence that queries and analyses use current project facts.

| Hook | Trigger | Effect |
|---|---|---|
| pre-commit | Commit | AST rebuild; quick validation |
| post-checkout | Branch switch | Project map sync |
| manual | Run command | On-demand update |
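Graphify installs its own hooks, so the following is only a sketch of the underlying mechanism. A Git hook is an executable script in .git/hooks that Git runs on a given event. The `install_hook` helper and the placeholder command are hypothetical.

```python
import stat
import tempfile
from pathlib import Path


def install_hook(repo_dir: str, hook_name: str, command: str) -> Path:
    """Write an executable Git hook script that runs `command`."""
    hooks_dir = Path(repo_dir) / ".git" / "hooks"
    hooks_dir.mkdir(parents=True, exist_ok=True)
    hook_path = hooks_dir / hook_name
    hook_path.write_text(f"#!/bin/sh\n{command}\n")
    # Git only runs hooks that are marked executable.
    mode = hook_path.stat().st_mode
    hook_path.chmod(mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
    return hook_path


# Demonstrate against a throwaway directory instead of a real repository.
with tempfile.TemporaryDirectory() as repo:
    # Placeholder command: substitute whatever rebuild command you use.
    path = install_hook(repo, "post-checkout", 'echo "rebuild graph here"')
    print(path.read_text())
```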

Integrating with MCP Servers for Persistent Access

A local MCP endpoint lets our assistant query project facts without reloading files.

We start the server using a single command: python -m graphify.serve graphify-out/graph.json. That process exposes the indexed graph as a stable API the assistant can call repeatedly.

Persistent Access

This mode makes repeated tool-call queries fast and deterministic. The server listens for commands such as query_graph or get_neighbors. Each call returns structured nodes, edges, and confidence tags so we do not resend raw files.

We plug the MCP into our existing auth and model stacks. That lets our system respect current credentials and routing. The setup is simple to script and fits CI workflows.

- Persistent API reduces token use and speeds answers.

- Direct graph access improves reasoning over project data.

- Seamless auth integration keeps access secure.

| Action | Command | Benefit |
|---|---|---|
| Start server | python -m graphify.serve graphify-out/graph.json | Persistent access |
| Query | query_graph / get_neighbors | Structured responses |
| Integrate | Auth + model config | Secure, consistent mode |
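The shape of those tool calls can be sketched without any server code. The tool names query_graph and get_neighbors come from this guide, but the signatures, the in-memory graph, and the dispatcher below are assumptions for illustration and do not implement the MCP protocol itself.

```python
# Hypothetical in-memory graph: node -> list of (neighbor, relation, confidence).
GRAPH = {
    "auth.py": [("tokens.py", "imports", "EXTRACTED")],
    "tokens.py": [("crypto.py", "imports", "EXTRACTED")],
    "crypto.py": [],
}


def get_neighbors(node: str) -> list[dict]:
    """Return the outgoing edges of one node as structured records."""
    return [
        {"source": node, "target": target, "relation": relation, "confidence": conf}
        for target, relation, conf in GRAPH.get(node, [])
    ]


def query_graph(term: str) -> list[str]:
    """Return node names containing `term` (a stand-in for real querying)."""
    return sorted(name for name in GRAPH if term.lower() in name.lower())


TOOLS = {"get_neighbors": get_neighbors, "query_graph": query_graph}


def handle_call(tool: str, argument: str):
    """Dispatch one tool call by name, the way a server routes requests."""
    if tool not in TOOLS:
        raise ValueError(f"unknown tool: {tool}")
    return TOOLS[tool](argument)


print(handle_call("query_graph", "tok"))
print(handle_call("get_neighbors", "auth.py"))
```

Each call returns small structured records, so the assistant receives edges and tags rather than whole files.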

Supporting Diverse File Types and Formats

We index not just source files but also slides, spreadsheets, and design assets for full context. That lets us treat every project artifact as searchable project data.

When optional dependencies are installed, the system parses code across 25+ languages. It also extracts text from PDFs and Microsoft Office documents.

We include images and media so design work and charts live alongside code. This gives the assistant a richer view of the project and reduces blind spots.

Handling Office and Media Files

- We support a wide range of files, including code in many languages, PDFs, and images.

- Office documents and media files become searchable nodes in our map for better context.

- The system is flexible: add new file types as the project grows.

| Format | What We Extract | Benefit |
|---|---|---|
| Code (25+ languages) | ASTs, symbols | Precise structure and links |
| PDF / Office | Text, metadata | Documented decisions and specs |
| Images / Media | Alt text, captions | Design context and visuals |
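Handling mixed formats usually starts with routing each file to the right extractor. The sketch below shows that routing step with a hypothetical extension table and strategy names; the extensions Graphify actually supports depend on which optional dependencies are installed.

```python
from pathlib import Path

# Hypothetical routing table: extension -> extraction strategy.
EXTRACTORS = {
    ".py": "ast",
    ".ts": "ast",
    ".go": "ast",
    ".pdf": "text",
    ".docx": "text",
    ".xlsx": "text",
    ".png": "media",
    ".jpg": "media",
}


def classify(path: str) -> str:
    """Pick an extraction strategy from the file extension."""
    return EXTRACTORS.get(Path(path).suffix.lower(), "skip")


def group_by_strategy(paths):
    """Bucket paths by strategy so each extractor gets its own batch."""
    groups = {}
    for path in paths:
        groups.setdefault(classify(path), []).append(path)
    return groups


files = ["src/app.py", "docs/spec.PDF", "design/logo.png", "notes.txt"]
print(group_by_strategy(files))
```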

Our approach keeps auth and access controls intact, so sensitive files remain protected while still contributing to a unified knowledge graph.

Advanced Configuration for Large Codebases

For massive repositories, small tweaks to our config make extraction run far smoother.

We use flags like --max-workers to control parallel parsing across many files. That helps balance CPU and memory so the process stays stable on large codebases.

Setting a --token-budget or similar cap keeps the model from reading too much context. This reduces token usage and keeps responses tight.

We switch the mode between deep and fast passes depending on priorities. For mixed languages and heavy repositories, we favor incremental scans and cache outputs to speed updates.

- Limit workers for low-memory hosts.

- Set token budgets to protect quotas.

- Use incremental mode for frequent commits.

| Setting | Purpose | Typical Value |
|---|---|---|
| --max-workers | Parallel file parsing | 4–16 (depends on CPU) |
| --token-budget | Limit model tokens per run | 200–1000 tokens |
| mode | Choose deep or fast extraction | incremental / full |
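What a worker cap does can be shown with Python's standard thread pool. This is a generic sketch of bounded parallel parsing, not Graphify's implementation, and `parse_file` is a trivial stand-in for a real parser.

```python
from concurrent.futures import ThreadPoolExecutor


def parse_file(source: str) -> int:
    """Stand-in for a real parser: count non-empty lines."""
    return sum(1 for line in source.splitlines() if line.strip())


def parse_all(sources: dict[str, str], max_workers: int = 4) -> dict[str, int]:
    """Parse every file with at most `max_workers` running at once."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order, so results line up with the names.
        counts = pool.map(parse_file, sources.values())
        return dict(zip(sources.keys(), counts))


# Hypothetical file contents keyed by path.
SOURCES = {
    "a.py": "x = 1\n\ny = 2\n",
    "b.py": "",
    "c.py": "def f():\n    return 1\n",
}
print(parse_all(SOURCES, max_workers=2))
```

A lower cap trades throughput for a smaller memory footprint. For CPU-heavy pure-Python work, a process pool may be the better fit, since threads share one interpreter lock.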

Maintaining Your Graph with Update Commands

We keep our project map current by running lightweight update commands that target only changed files. This approach stops us from rebuilding the entire graph each time.

Use the --update flag to re-extract only the files that changed since the last run. The command reads the cache and refreshes nodes quickly.

Because we rely on the cache, updates are fast and save us a lot of time every time we push changes. Our assistant stays aligned to the latest facts in the current session.

- Fast updates: reprocess only changed files.

- Low overhead: cache reduces CPU and IO work.

- Reliable state: session queries reflect current project data.

| Action | Scope | Typical Duration |
|---|---|---|
| Update (--update) | Changed files only | Seconds to minutes |
| Full rebuild | All files in repo | Minutes to hours |
| Cache clear + rebuild | All files, fresh state | Depends on size; longest |
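Incremental updates depend on knowing which files changed. A common approach, assumed here for illustration rather than taken from Graphify's cache format, is to store a content hash per file and reprocess only the files whose hash differs.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Hash file content so any edit produces a different fingerprint."""
    return hashlib.sha256(content).hexdigest()


def changed_files(files: dict[str, bytes], cache: dict[str, str]) -> list[str]:
    """Return paths that are new or whose content differs from the cache."""
    return sorted(
        path for path, content in files.items()
        if cache.get(path) != fingerprint(content)
    )


def refresh_cache(files: dict[str, bytes]) -> dict[str, str]:
    """Record the current fingerprint of every file."""
    return {path: fingerprint(content) for path, content in files.items()}


# Hypothetical snapshot before and after an edit.
before = {"a.py": b"x = 1\n", "b.py": b"y = 2\n"}
cache = refresh_cache(before)
after = {"a.py": b"x = 1\n", "b.py": b"y = 3\n", "c.py": b"z = 0\n"}

print(changed_files(after, cache))
```

Only the edited file and the new file come back, so the unchanged one is never re-parsed.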

Run the update command as part of commits or CI to keep the graph useful. For more on automating codebase mapping and practical tips, see our guide to the codebase toolset and a troubleshooting note on analytics updates.


Scaling Insights Across Multiple Projects

A global graph lets us connect separate projects and surface links that span repositories.

We register each project graph into a shared registry so the assistant can answer questions across teams. This lets us trace ownership, dependencies, and reuse without opening many files manually.

By combining graphs we build a unified view of our organization. We keep each project’s index but join them into a single queryable map. That makes cross-repo searches fast and reliable.

- Broader visibility: queries return nodes across several codebase instances.

- Organized files: consistent indexing keeps artifacts easy to find.

- Actionable links: cross-project edges reveal refactor and risk opportunities.

Our system scales: we add or remove project graphs and the central graph updates incrementally. That keeps data fresh and helps teams maintain quality across every codebase.

| Scale | Benefit | Action |
|---|---|---|
| Multiple repos | Unified insights | Register graphs |
| Shared index | Faster triage | Query global graph |
| Incremental updates | Low overhead | Sync changed files |
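Joining project graphs without losing each project's identity can be sketched by prefixing node names with the project name. The registry contents, the prefix scheme, and the helper names are hypothetical.

```python
def namespaced(project: str, node: str) -> str:
    """Prefix a node with its project so names cannot collide."""
    return f"{project}:{node}"


def merge_graphs(registry: dict[str, list[tuple[str, str]]]):
    """Combine per-project edge lists into one edge list with prefixed nodes."""
    merged = []
    for project, edges in sorted(registry.items()):
        for source, target in edges:
            merged.append((namespaced(project, source), namespaced(project, target)))
    return merged


def link_projects(merged, cross_edges):
    """Append explicit cross-project edges, given as already-prefixed pairs."""
    return merged + list(cross_edges)


# Hypothetical registry: both projects have a module called "utils".
REGISTRY = {
    "billing": [("api", "utils")],
    "accounts": [("api", "utils")],
}
merged = merge_graphs(REGISTRY)
combined = link_projects(merged, [("billing:api", "accounts:api")])
print(combined)
```

Prefixing keeps the two "utils" modules distinct, while the cross edge records that one project depends on the other.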

Transforming How We Interact with Our Data

Transforming how we interact with our data means we no longer treat files as transient inputs. Instead, we build a living knowledge layer that the model can query for reliable context.

By combining PDFs, images, code, and metadata into a single graph, we answer complex questions about architecture, auth flows, and design choices fast. The system keeps relationships explicit, so each query returns clear facts rather than guesses.

This approach reduces wasted tokens and time in repeated sessions. It makes our team more productive and helps us ship higher-quality software with less friction.