Have you ever wondered how a local index can turn messy notes into an instant, reliable resource that speeds up every task?

We use qmd with Claude to turn our files into a fast, on-device search engine. This gives our team immediate access to meeting transcripts, markdown notes, and docs during every coding session.

By pairing the index with the Claude Code skill, we automate transcript conversion into searchable markdown. That keeps our local knowledge fresh and easy to find.

These tools help us keep a seamless link between documentation and AI agents. Every search returns precise snippets, and our developers stay focused on code, not hunting for context.

In short, this workflow preserves privacy, maintains speed, and boosts productivity across sessions. We rely on these techniques to keep our work efficient and well-informed.

Key Takeaways

- We index local notes to make fast, private searches during development sessions.

- The Claude Code skill converts transcripts into searchable markdown automatically.

- Integrating these tools keeps documentation synced with AI agents.

- Every search retrieves precise information to speed up decision-making.

- This setup boosts productivity without sacrificing privacy or speed.

Understanding the Power of QMD with Claude

A layered retrieval approach makes our local archive behave like a powerful, private search engine. We combine classic keyword matches and modern semantic methods to deliver fast, relevant results.

At the core, the system blends BM25 full-text lookup, vector similarity, and LLM reranking. That trio turns noisy inputs into ranked, useful snippets for any query.

We keep our knowledge organized as a collection of documents and context tags. This structure helps the model form better answers and prevents irrelevant matches.

- We generate local embeddings using node-llama-cpp so semantic search stays fast and private.

- Hybrid query runs expand terms and apply reranking for precision.

- Thousands of indexed notes mean relevant facts are ready whenever we need them.

Getting Started with Installation and Setup

A smooth setup saves time later; here’s how to get the MCP server and CLI working on your machine.

Using Bun for Installation

We prefer Bun because it installs fast and runs reliably for local tools. To install the package globally run:

bun install -g @tobilu/qmd

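This places the qmd binary in Bun's global bin directory (by default ~/.bun/bin), so the CLI can be called from any directory once that folder is on your PATH. Note that this is a per-user install, not a system-wide one. It also reduces startup delays compared to some Node.js setups.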

Verifying Your Environment

After installation, we verify that the MCP server is visible to the shell. Run the CLI help and a quick status check to confirm paths and links.

- Check the binary path and ensure it is linked in your PATH.

- Start a test session and confirm the server registers without errors.

- Resolve common setup problems by inspecting installation logs and file permissions.
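The checks above can be sketched as a short shell snippet. It assumes Bun used its default global bin directory (~/.bun/bin) and that `qmd --help` and `qmd status` behave as described in the qmd README; confirm both against your installed version.

```shell
# Confirm the qmd binary is reachable from the current shell.
if qmd_path="$(command -v qmd)"; then
  echo "qmd found at: $qmd_path"
  # Print usage, then a summary of the index and its collections.
  qmd --help
  qmd status
else
  qmd_path=""
  # Assumption: Bun's default global bin directory is ~/.bun/bin.
  echo "qmd is not on PATH; try: export PATH=\"\$HOME/.bun/bin:\$PATH\"" >&2
fi
```

If the binary is missing, adding the export line to your shell profile is usually enough.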

Finally, we recommend installing the Claude Code skill to automate parts of the setup. That step saves time when initializing new projects and keeps sessions stable.

Organizing Your Knowledge into Collections

We split our knowledge into clear collections so finding the right document is fast. A simple structure keeps our team focused and helps tools like qmd map content quickly.

We group markdown, meeting notes, and project docs into named collections. Each group holds similar files and gets its own index. That lets us run a targeted search against one category instead of scanning everything.

Every collection includes short metadata entries that add useful context. Those tags improve relevance and guide retrieval toward the right documents.

- Index collections individually to speed queries and reduce noise.

- Keep the index fresh by adding recent transcripts and project updates.

- Group related files so AI agents access only pertinent content.

Maintaining tidy collections is the foundation of good knowledge work. When collections stay organized, we find answers faster and keep our documentation reliable.
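As a minimal sketch of that layout, the snippet below registers three collections. The folder paths and collection names are hypothetical, and the `qmd collection add <dir> --name <name>` form follows the qmd README as we understand it; check `qmd --help` before relying on it.

```shell
# Register one directory as a named collection, skipping folders that do not exist.
add_collection() {
  # $1 = directory to index, $2 = collection name
  if [ ! -d "$1" ]; then
    echo "skip $2: $1 does not exist" >&2
    return 0
  fi
  qmd collection add "$1" --name "$2"
}

if command -v qmd >/dev/null 2>&1; then
  # Hypothetical folders; replace with your own.
  add_collection "$HOME/notes" notes
  add_collection "$HOME/Documents/meetings" meetings
  add_collection "$HOME/work/docs" docs
  # Show what is registered so far.
  qmd collection list
fi
```

Keeping one directory per collection makes it easy to scope a later search to a single category.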

Enhancing Search Accuracy with Contextual Data

We improve result relevance by attaching clear, human-readable context to every virtual path. This small step guides the model and makes responses more precise for day-to-day work.

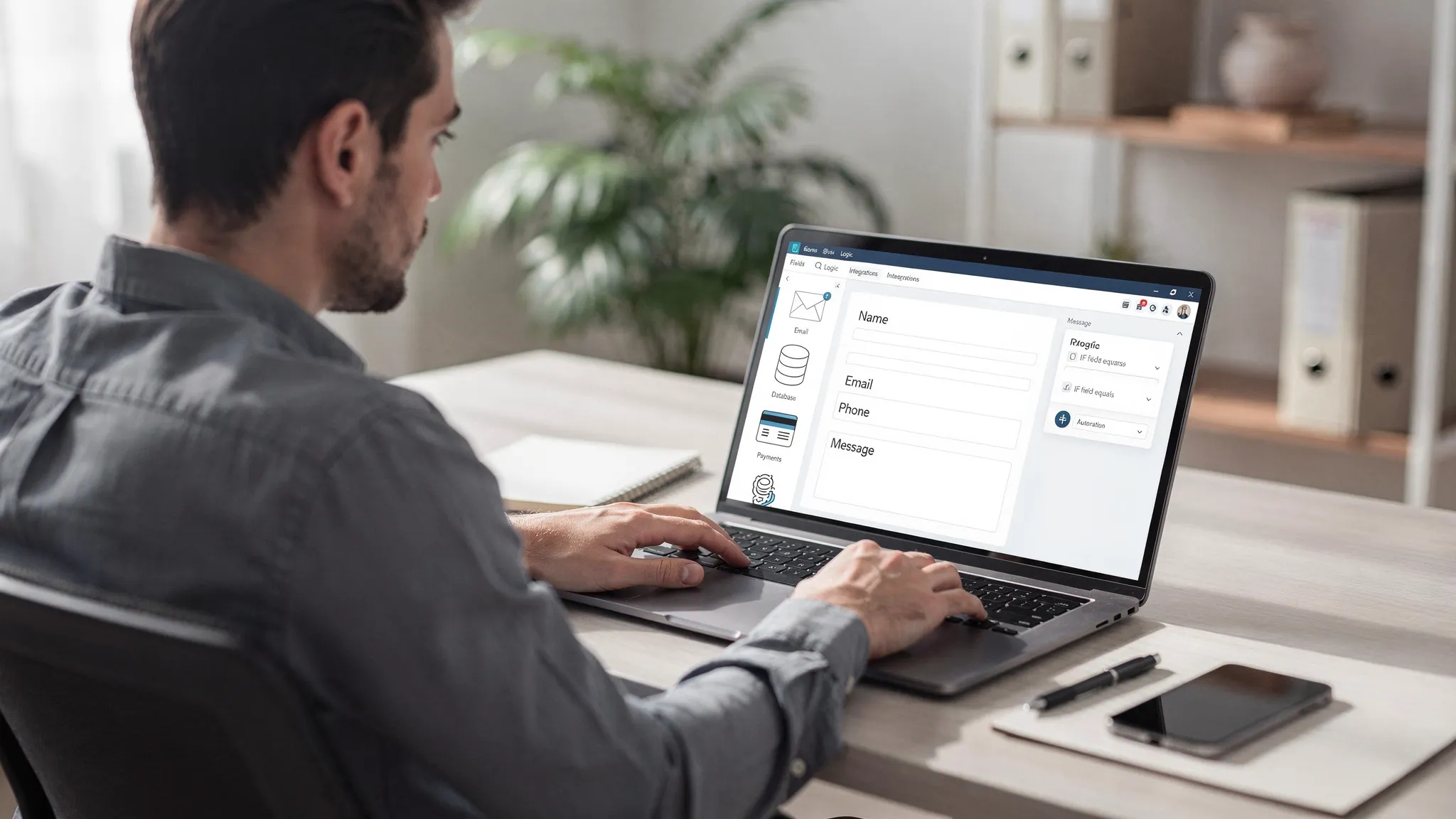

Adding Context to Virtual Paths

When we label a file or folder, the LLM better understands the underlying content. That clarity reduces noise and helps the agent pick the right document on the first pass.

- We use a simple command to add context tags to files and directories so a query returns focused hits.

- Labeling documents helps the system distinguish meeting notes, code snippets, and specs.

- Maintaining a hierarchy of context ensures documents stay organized and retrieval stays fast.

- As a result, our team spends less time filtering irrelevant documents during complex searches.

qmd reads these context labels and maps them into the index, so our search stays both private and accurate.
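A hedged sketch of the labeling step follows. The collection names and descriptions are hypothetical, and the `qmd context add qmd://<collection> "<description>"` form is taken from the qmd README as we understand it.

```shell
# Attach a human-readable description to a virtual path.
add_context() {
  # $1 = virtual path (qmd://collection[/subpath]), $2 = description
  case "$1" in
    qmd://*) ;;
    *) echo "expected a qmd:// virtual path, got: $1" >&2; return 2 ;;
  esac
  qmd context add "$1" "$2"
}

if command -v qmd >/dev/null 2>&1; then
  # Hypothetical labels; broader paths first, then narrower ones.
  add_context qmd://notes "Personal notes and ideas"
  add_context qmd://meetings "Meeting transcripts and action items"
  add_context qmd://docs/api "API specs and reference docs"
fi
```

The small validation step catches a common slip: passing a bare collection name instead of a qmd:// path.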

Mastering Search and Retrieval Commands

Fast, accurate results come from combining classic keyword lookups with modern semantic tools.

Keyword Search with BM25

We use the keyword search for quick lookups that return exact matches from a collection or file. This command helps when we know a specific keyword or phrase to find.
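For illustration, a keyword lookup might look like the following. The query and collection name are hypothetical; `qmd search`, `-c`, and `-n` are used as documented in the qmd README, so verify them with `qmd --help`.

```shell
query="deployment checklist"   # hypothetical search phrase
collection="notes"             # hypothetical collection name

if command -v qmd >/dev/null 2>&1; then
  # BM25 keyword search across every collection.
  qmd search "$query"
  # Same search, limited to one collection and capped at five results.
  qmd search "$query" -c "$collection" -n 5
fi
```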

Vector Similarity Search

For nuanced queries, we run a vector pass that uses embeddings to surface semantically related documents. Vector search finds related content even when the wording differs.
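A semantic lookup can be sketched as below. The question text is hypothetical, and we assume embeddings are built with `qmd embed` before `qmd vsearch` is useful, as the qmd README describes.

```shell
question="how do we roll back a bad release"   # hypothetical natural-language query

if command -v qmd >/dev/null 2>&1; then
  # Generate embeddings for indexed documents (needed before vector search).
  qmd embed
  # Vector similarity search: matches on meaning rather than exact words.
  qmd vsearch "$question"
fi
```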

Hybrid Query Expansion

Our go-to is qmd query, the hybrid mode. It runs BM25, vector similarity, and LLM reranking so the most relevant results rank first.

We rely on reranking and embeddings to prioritize the best snippets. When needed, we add the --full flag to view the entire document or file.

- Use keyword search for exact matches and speed.

- Use vector for semantic recall across docs.

- Use hybrid queries to combine tools, reduce noise, and surface top results fast.
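The three choices above can be wrapped in one small helper. This is a sketch, not part of qmd: the `lookup` and `qmd_mode` names and the exact/semantic/best labels are our own, and only the underlying `search`, `vsearch`, and `query` subcommands come from the tool.

```shell
# Map a plain-language intent to a qmd subcommand.
qmd_mode() {
  case "$1" in
    exact)    echo search ;;    # BM25 keyword match
    semantic) echo vsearch ;;   # vector similarity
    best)     echo query ;;     # hybrid with reranking
    *)        echo "unknown mode: $1" >&2; return 1 ;;
  esac
}

# Run a lookup: first argument is the intent, the rest goes to qmd unchanged.
lookup() {
  mode="$1"; shift
  sub="$(qmd_mode "$mode")" || return 1
  qmd "$sub" "$@"
}

if command -v qmd >/dev/null 2>&1; then
  lookup exact "API rate limit"
  lookup best "why did we change the retry policy" --full
fi
```

Passing extra arguments through means flags such as --full work with any mode the subcommand supports.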

Integrating QMD into Your Agentic Workflow

We tie our local index into agent flows so every coding session leaves a searchable trail.

The Claude Code skill lives at ~/.claude/skills/qmd-sessions/. It hooks into our tooling and updates the index automatically at the end of each session.

By connecting the MCP server to our agents, we let them run a real-time search across local files and docs. Agents call the query command to fetch recent context before starting a task.

We configured the environment so every session is indexed. This lets agents learn from past work and suggest better fixes or code snippets during a new session.

- Automatic indexing via the skill keeps history current.

- Agents query session logs and file metadata for precise context.

- The MCP architecture ensures smooth data flow between our tools and agents.

As a result, our agents are more capable and context-aware. The integration changed how we interact with AI during development and made each session more productive.
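One way to wire this up is sketched below. It assumes the Claude Code CLI is installed as `claude` and that `claude mcp add <name> -- <command>` registers a stdio MCP server, with `qmd mcp` starting the server; treat both as assumptions to verify against the current documentation.

```shell
skill_dir="$HOME/.claude/skills/qmd-sessions"   # skill location from the text

# Report whether the session skill is present.
if [ -d "$skill_dir" ]; then
  echo "skill found at $skill_dir"
else
  echo "skill not found at $skill_dir" >&2
fi

# Register qmd as an MCP server for Claude Code, if both CLIs are available.
if command -v claude >/dev/null 2>&1 && command -v qmd >/dev/null 2>&1; then
  claude mcp add qmd -- qmd mcp
  claude mcp list
fi
```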

Configuring the MCP Server for Seamless Interaction

We make the MCP server simple to run so our development flow stays uninterrupted. The HTTP transport listens on port 8181 by default, and we run it as a background daemon to save time and avoid restarts.

Running the HTTP Transport Daemon

Start the daemon once and let it stay up. A long-lived MCP process keeps our tools responsive and reduces friction during every coding session.

- Background daemon: ensures the server is always available without manual restarts.

- Port 8181: the default HTTP transport keeps a stable connection for faster requests.

- Access to files and context: agents can fetch needed context any time during a session.

- Reliable architecture: MCP gives us a standard interface to interact with local data and index services.

- Monitoring: we watch the server status so our index stays accessible and our qmd-based tools run smoothly.

Proper configuration of the MCP server is the key to seamless interaction between local data and our AI agents. When the server is steady, we save time and stay focused on real work.
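A minimal sketch of starting and probing the daemon follows. The `--http` and `--daemon` flags and the `/health` endpoint are taken from the qmd README as we recall it and should be treated as assumptions; port 8181 is the default named above.

```shell
port=8181                                     # default HTTP port from the text
health_url="http://localhost:${port}/health"  # assumed liveness endpoint

if command -v qmd >/dev/null 2>&1 && command -v curl >/dev/null 2>&1; then
  # Start the HTTP transport in the background.
  qmd mcp --http --daemon
  sleep 1
  # Probe the server; -f makes curl fail on HTTP error codes.
  if curl -fsS "$health_url" >/dev/null; then
    echo "MCP server is up on port $port"
  else
    echo "no response from $health_url" >&2
  fi
  # To shut the daemon down later: qmd mcp stop
fi
```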

Maintaining Your Index and Performance

Keeping our local index lean and current saves us time when a session needs quick facts.

We store the index at ~/.cache/qmd/index.sqlite. The system chunks documents into 900-token segments with a 15% overlap. This smart chunking improves retrieval and keeps results focused.
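To make the chunking numbers concrete, here is a small arithmetic sketch. It models plain fixed-size windows, which is a simplification: the real chunker may pick break points differently, and the 3000-token document length is a hypothetical input.

```shell
chunk_tokens=900    # chunk size from the text
overlap_pct=15      # overlap percentage from the text
doc_tokens=3000     # hypothetical document length

overlap=$(( chunk_tokens * overlap_pct / 100 ))   # tokens shared by neighbouring chunks
stride=$(( chunk_tokens - overlap ))              # new tokens contributed by each chunk

if [ "$doc_tokens" -le "$chunk_tokens" ]; then
  chunks=1
else
  # One full chunk, plus enough strides to cover the rest (ceiling division).
  chunks=$(( 1 + (doc_tokens - chunk_tokens + stride - 1) / stride ))
fi

echo "overlap=$overlap stride=$stride chunks=$chunks"
# prints: overlap=135 stride=765 chunks=4
```

So each chunk repeats the last 135 tokens of its predecessor, which keeps sentences that straddle a boundary retrievable from either side.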

Regular updates matter. We run periodic re-indexing and regenerate embeddings so vector search stays accurate as the collection grows.

- Schedule fast index updates (for example, from a cron job) to sync new files and notes.

- Keep the server running during work hours so the MCP endpoint answers fast.

- Regenerate embeddings on a schedule to prevent drift in semantic matches.

| Task | Frequency | Why it matters | Impact |
|---|---|---|---|
| Index update | Daily or on-save | Reflects latest files and notes | Faster, current search results |
| Embedding regen | Weekly | Maintains vector accuracy as collection grows | Better semantic matches |
| Server health check | Hourly | Ensures MCP responds to queries | Reliable session performance |
| Chunking review | Monthly | Validates 900-token chunks and 15% overlap | Optimal retrieval speed |

Maintaining this routine prevents performance drops and keeps our keyword and vector searches sharp. For deeper design notes on agent memory, see our write-up on memory architecture.
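The routine can be captured in a small refresh function. This is a sketch under assumptions: `qmd update` and `qmd embed` are used as the qmd README describes them, and the cron line and script path in the comment are hypothetical.

```shell
index_db="$HOME/.cache/qmd/index.sqlite"   # index location from the text

# Re-scan collections, refresh embeddings, and report the index size.
refresh_index() {
  command -v qmd >/dev/null 2>&1 || { echo "qmd not installed" >&2; return 127; }
  qmd update   # pick up new or changed files
  qmd embed    # generate embeddings for indexed documents
  if [ -f "$index_db" ]; then
    ls -lh "$index_db"
  fi
}

if command -v qmd >/dev/null 2>&1; then
  refresh_index
fi

# Example crontab entry (hypothetical script path), weekdays at 09:00:
# 0 9 * * 1-5 /bin/sh "$HOME/bin/qmd-refresh.sh"
```

Watching the index file size over time is a cheap way to notice when a collection has grown enough to warrant a chunking review.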

Elevating Your Productivity with Local Search

Local, fast search turns scattered markdown and transcripts into instant answers that keep our team moving.

We let every user run a keyword search or a vector query so questions get solved without digging through files. Our qmd MCP setup ties the index into agent flows and the Claude Code skill, so context is available when an agent needs it.

Combining BM25, vector similarity, and LLM reranking keeps results precise at scale. These tools shrink friction in daily work and help us stay focused on building features, not hunting for facts.

Learn the setup, practice the commands, and your team will see faster answers and fewer interruptions.